By REBECCA RUTHERFORD

Los Alamos

For the Los Alamos Daily Post

It started just after midnight Pacific Time on Monday—most of us were likely still half-asleep, relying on devices we trust to wake us, shop for us, secure our homes, keep us connected.

Instead, a massive outage across the internet made it clear just how thin the thread is that holds our digital lives together.

I tried to check my Ring camera on the front porch around 3 a.m. to look in on the pumpkin my kid carved and see if anything was trying to eat it. It wouldn’t connect at all and wouldn’t even display its history. I checked downdetector.com to see if there was an outage, and sure enough, there were tons of reports. I decided the pumpkin would probably be OK and went back to sleep.

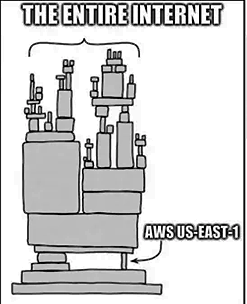

The culprit? Amazon Web Services (AWS). Specifically, its central hub in Northern Virginia, known in tech speak as the US-EAST-1 region. Yikes!

AWS outage meme. Courtesy image

What happened?

At about 3 a.m. ET, multiple services under the AWS umbrella began reporting high error rates and latency—requests failing, pages not loading, alarms not sounding.

Soon the ripple effect became visible:

- Gaming platforms like Fortnite and Roblox going dark.

- Payment apps like Venmo and financial institutions reporting failures in authorization flows.

- Smart home devices (think doorbells, security cameras) going offline or stuck in error loops.

- Even in the UK and beyond, banks and government sites were hit.

By around 9:30 a.m. ET (roughly 6:30 a.m. PT), AWS declared the core issue “fully mitigated.” But the disruption wasn’t simply erased. Many services continued to feel the hangover of a clogged system, with delayed processing and lingering weirdness. I had issues with Alexa on and off all day; Ring seemed to come back online OK. Many users who rely on Alexa as an alarm clock had a rough morning when their alarms never went off.

Why it matters

Because it shows how much of our lives depend on one giant cloud provider. When AWS sneezed, half the internet got a cold. The region in question, US-EAST-1, is among AWS’s busiest – it hosts foundational infrastructure including DNS translation, database endpoints, and computing capacity.

A simplified analogy: imagine the post office in your city shut down one morning. Your mail, your online orders, your bills, your bank notifications: it all just stopped. That’s what this was like for our extremely internet-connected society.

The technical culprit

What has AWS shared so far about the cause?

- The origin: an “internal subsystem responsible for monitoring the health of our network load balancers” in the US-EAST-1 region.

- The symptom: DNS resolution errors for the DynamoDB API endpoint – basically, the system that maps names to servers stopped translating properly.

- The effect: downstream services couldn’t connect, could not launch new compute instances in that region, and certain queues/registers became throttled.
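For readers curious what “DNS resolution errors” look like from an app’s side, here is a minimal Python sketch. It asks the computer’s resolver to translate the DynamoDB regional endpoint name into IP addresses and reports a failure instead of crashing. The hostname follows AWS’s standard regional endpoint format; the helper names `resolve` and `check` are made up for illustration, and this is not how AWS or any particular app actually handles the error.

```python
import socket

# The regional DynamoDB endpoint named in AWS's update (US-EAST-1).
ENDPOINT = "dynamodb.us-east-1.amazonaws.com"


def resolve(hostname, resolver=socket.getaddrinfo):
    """Return the sorted, de-duplicated IP addresses for hostname.

    Raises socket.gaierror when DNS cannot translate the name, which is
    roughly what client apps ran into during the outage.
    """
    infos = resolver(hostname, 443, proto=socket.IPPROTO_TCP)
    # Each entry is (family, type, proto, canonname, sockaddr);
    # the IP address is the first item of sockaddr.
    return sorted({info[4][0] for info in infos})


def check(hostname, resolver=socket.getaddrinfo):
    """Report whether a name resolves, without letting a DNS failure crash the caller."""
    try:
        addresses = resolve(hostname, resolver)
    except socket.gaierror as err:
        return f"{hostname}: DNS lookup failed ({err})"
    return f"{hostname}: resolves to {', '.join(addresses)}"
```

On a machine with internet access, `check(ENDPOINT)` returns the endpoint’s current addresses. When the name can’t be translated, every request that depends on it fails before it ever reaches a server, which is why so many unrelated apps broke at once.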

Good to note: there’s no indication of a cyber-attack or malicious intrusion. This appears to be a failure of internal infrastructure. Danny the intern cut the cord again?

What it means for you

- For users: If your doorbell app didn’t work, your gaming streak was broken, or you couldn’t log into your bank, you weren’t alone. Services were broken on and off through the night and into the day!

- For businesses: If you rely on cloud services for your website, your app, your back end, this was a reminder: centralize too much and you’re vulnerable.

- For the internet ecosystem: It reignites debate about concentration of cloud power; when one provider stumbles, the dominoes fall. Experts are already saying that this needs to be a wake-up call.

What’s next

- AWS says it will publish a Post-Event Summary detailing the root cause, timeline, and future mitigation steps.

- For users: If you’re still seeing issues (slow loading, errors, weird behavior), it may be a backlog effect: work queued during the outage is still catching up.

- For companies: Time to revisit redundancy plans. Multi-region, multi-cloud strategies may be more cost-effective when the cost of downtime is this steep.
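What does a multi-region plan mean in practice? At its simplest, an app tries its primary region and falls back to a second one if the first doesn’t answer. The Python sketch below illustrates that idea only. The region list (US-EAST-1 plus US-WEST-2 as a backup) and the `request` function are hypothetical stand-ins, not any company’s actual setup or an AWS API.

```python
from typing import Callable, Sequence

# Hypothetical region list: primary first, then a backup region.
REGIONS = ("us-east-1", "us-west-2")


class AllRegionsFailed(Exception):
    """Raised when every configured region failed to answer."""


def call_with_failover(request: Callable[[str], str],
                       regions: Sequence[str] = REGIONS) -> str:
    """Try the request in each region in order and return the first success.

    `request` stands in for whatever the app really does (read a record,
    charge a card) and is assumed to raise an exception when a region is down.
    """
    errors = {}
    for region in regions:
        try:
            return request(region)
        except Exception as err:  # a real app would catch narrower errors
            errors[region] = err
    raise AllRegionsFailed(f"no region answered: {errors}")
```

The code is the easy part. The expense behind “redundancy” is keeping a current copy of the data and enough spare capacity in the backup region so it can actually take over when the primary goes dark.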

Let’s hope the post-event report gives us some answers, and maybe a few lessons on how to build a more resilient internet. This outage reminded us how much we rely on the internet, and how dangerous that can be.

Editor’s note: Rebecca Rutherford works in information technology at Los Alamos National Laboratory.